7 Effective Strategies to Improve Brand Visibility in AI Search Engines

The New Era of Discovery: Beyond the Ten Blue Links

The digital landscape is currently navigating its most profound tectonic shift since the commercialization of the internet. For decades, the "Ten Blue Links" served as the undisputed gatekeepers of human knowledge and consumer commerce. If you owned the first page of Google, you owned the market. But the walls of that garden are coming down. We have transitioned from the era of search into the era of synthesized discovery.

The data points to a reality that most marketers are only beginning to grasp. By 2027, AI search channels are projected to drive as much business value as traditional organic search, and they are expected to surpass it shortly thereafter. This isn't a distant "future-tech" prediction; it is an immediate market transition. Consider the current scale: ChatGPT has surged to over 900 million weekly users, while Google AI Overviews now appear in a staggering 88% of informational search queries.

Here is the hard truth for every search professional: Being #1 on Google no longer guarantees you a seat at the table. Your brand can hold the top organic spot for a high-value keyword and still remain entirely invisible in the AI-generated answer that occupies the most valuable real estate on the screen. To survive this transition, you must master how to improve brand visibility in AI search engines. This requires moving beyond traditional ranking signals and embracing a framework designed for the "agentic web," where Large Language Models (LLMs) act as digital concierges, synthesizing, recommending, and eventually performing tasks on behalf of the user.

Defining the Landscape: AI SEO vs. Traditional Search

To navigate this new world, we must move past the buzzwords and understand the specific strategic levers at our disposal. While "search" as a concept remains, the mechanics of visibility have bifurcated into three distinct but related domains.

AI SEO: This is the tactical practice of optimizing content so it appears as a verified citation or direct mention within AI-powered responses. If traditional SEO was about "ranking," AI SEO is about "inclusion."

AI Visibility: This is your brand's share of voice across the LLM ecosystem. It is a measurement of how frequently your brand is recommended or cited across platforms like ChatGPT, Perplexity, and Gemini compared to your competitors.

Generative Engine Optimization (GEO): This is the holistic strategy of becoming a foundational part of the final synthesized output. GEO focuses on Agentic Search, a process where the AI doesn't just provide a list of sources but retrieves, analyzes, and presents a definitive answer to a user’s query or even completes a transaction (Agentic Commerce).

The Platform Divergence

The AI search landscape is not a monolith. Each platform utilizes different retrieval mechanisms, which leads to a massive divergence in what content gets cited. Our research shows a startling lack of overlap: only 2.1% of ChatGPT citations match the URLs found on the first page of Google. Meanwhile, Perplexity shows a 32% overlap, and Google AI Mode sits at 15.5%. This means a "Google-only" strategy is a recipe for invisibility everywhere else.

The Value Gap

Why invest so heavily in these platforms? It comes down to intent and conversion. Semrush research estimates that AI search visitors convert 4.4x better than traditional search visitors. These users aren't just "browsing"; they are using AI to refine their options, compare specific features, and validate their final decisions. When an AI recommends your brand, it carries the weight of a synthesized consensus, making the lead significantly warmer than a standard organic click.

Strategy 1: Front-Load Content with Direct, Extractable Answers

AI systems are "answer engines" before they are search engines. They are designed to minimize the effort a user spends finding information. To be the source they choose, your content must adopt an "Answer-First" methodology.

This aligns perfectly with Microsoft’s official guidelines for generative search, which emphasize that content must answer real questions directly without requiring "interpretation" by the bot. If an AI has to dig through 1,000 words of preamble to find your point, it will simply move on to a competitor who was more concise.

The Answer-First Execution Pattern

To maximize your extraction potential, apply this 4-step pattern to every H2 and H3 section of your high-value pages:

Open with the Core Definition: Start every section with a 40–60 word direct response to the heading. This is the "sweet spot" for extraction into featured snippets and AI overviews.

Terminology Alignment: Use the exact terminology from the heading in your opening sentence. If your H2 is "What is Agentic Search?", your first sentence should be "Agentic Search is..." rather than "This new concept involves..."

The Two-Sentence Rule: Keep your core definition to a maximum of two sentences. This makes the "chunk" of information easy for an LLM to identify as the primary answer.

Contextual Detail: Use the remainder of the section to provide the "why," the data, or the examples.

Strategist's Pro-Tip: Think of your content as a series of standalone modules. If an AI pulled a single paragraph from your page and presented it to a user, would that paragraph make complete sense without the rest of the article? If the answer is no, you are failing the extraction test.
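The four-step pattern above can be turned into a rough self-check. The sketch below is only an illustration: the function name `check_answer_first`, the naive sentence splitter, and the small stop-word list are assumptions made for this example, not part of any official guideline or tool.

```python
import re

def check_answer_first(heading: str, section_text: str) -> dict:
    """Check a section's opening paragraph against the Answer-First pattern."""
    # Assumption: the "core definition" is the first paragraph of the section.
    opening = section_text.strip().split("\n\n")[0].strip()
    words = opening.split()
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", opening) if s]
    first_sentence = sentences[0].lower() if sentences else ""
    # Key terms: heading words longer than three characters, minus question words.
    stop = {"what", "which", "when", "where", "does", "should", "with", "your"}
    terms = [w for w in re.findall(r"[a-z0-9]+", heading.lower())
             if len(w) > 3 and w not in stop]
    return {
        "word_count": len(words),
        "length_ok": 40 <= len(words) <= 60,          # 40-60 word opening
        "two_sentence_rule": len(sentences) <= 2,     # at most two sentences
        "terminology_aligned": all(t in first_sentence for t in terms),
    }
```

Running this over every H2 and H3 section of a high-value page gives a quick list of openings that are too long, too short, or that never repeat the heading's own terminology. It is a heuristic, so treat a failed check as a prompt to reread the section rather than a verdict.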

Strategy 2: Optimize Technical Foundation and Crawlability

Visibility in the AI era begins with machine access. If an AI crawler, whether it’s GPTBot, PerplexityBot, or Common Crawl, encounters friction on your site, your brand effectively does not exist in their training data or real-time retrieval.

The Problem with JavaScript

One of the most critical technical hurdles today is the "Render Budget." Most AI crawlers struggle with, or completely ignore, JavaScript-heavy, client-side rendering (CSR). Executing JavaScript is computationally expensive. If your site relies on the user's browser to build the page, an AI bot may only see a blank template or a "loading" state.

Server-Side Rendering (SSR) is no longer optional for AI visibility. By serving the fully rendered HTML directly from the server, you ensure that even the most basic LLM scraper can ingest 100% of your content instantly.
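One way to approximate what a non-rendering bot sees is to count the words present in the raw HTML before any JavaScript runs. The following is a minimal sketch using only Python's standard library; the helper names and the 50-word threshold are assumptions chosen for illustration, and real crawlers may behave differently.

```python
from html.parser import HTMLParser

class _TextExtractor(HTMLParser):
    """Collect text present in the raw HTML, ignoring script and style bodies."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only text that sits outside script/style elements.
        if self._skip_depth == 0 and data.strip():
            self.parts.append(data.strip())

def raw_html_word_count(html: str) -> int:
    """Count words a scraper could read without executing JavaScript."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return len(" ".join(parser.parts).split())

def looks_client_rendered(html: str, min_words: int = 50) -> bool:
    """Flag pages whose raw HTML carries almost no readable text."""
    return raw_html_word_count(html) < min_words
```

To use it, fetch a page's HTML without a browser (for example with `urllib.request.urlopen`) and pass the decoded string to `looks_client_rendered`. A `True` result on a page that looks full in your browser suggests the content is being built client-side.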

Technical AI Health Checklist

Audit for Basic Friction: Broken links, slow LCP (Largest Contentful Paint), and mobile-friendliness are significant deterrents. Use tools like Semrush’s Site Audit to locate these "AI Search Health" issues.

Manage robots.txt: Ensure you aren't inadvertently blocking the bots that fuel AI search engines.

Adopt LLMs.txt: This experimental file format (a Markdown file conventionally served at /llms.txt) is a proposal rather than a ratified standard, and support varies by platform, so treat it as a low-cost addition rather than a guarantee. It acts as a "cheat sheet" for AI bots, telling them exactly which parts of your site contain high-value, citable information and how to interpret it.
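The robots.txt item in the checklist above is easy to verify in code. This sketch uses Python's standard `urllib.robotparser` module; the list of user-agent tokens is an assumption for the example (GPTBot and PerplexityBot are named earlier in this article, and CCBot is the token used by Common Crawl's crawler), so extend it with whichever bots matter to you.

```python
from urllib.robotparser import RobotFileParser

# Assumption: the crawlers worth checking; adjust this list for your own audit.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "CCBot"]

def blocked_ai_crawlers(robots_txt: str, url: str) -> list[str]:
    """Return the AI crawlers that the given robots.txt blocks from `url`."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]
```

Paste the contents of your live robots.txt into `robots_txt` and test a few important URLs. An empty list means none of the listed crawlers is blocked for that URL.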

Strategy 3: Structure for Machine Readability (Chunking)

LLMs do not process content like humans. Through a process called Retrieval-Augmented Generation (RAG), an AI takes a user's query and searches for the most relevant "chunks" of information across the web. To be the chosen chunk, your content must be optimized for vector embeddings, the mathematical language of AI.

Technical Writing for Vectorization

To improve how an AI parses your content, you must optimize for Subject-Verb Proximity. AI models analyze sentence structure by identifying the relationship between subjects and their actions. The further apart these are, the harder it is for the model to "score" the sentence's relevance accurately.

Avoid Ambiguous Pronouns: In the age of AI, "it," "this," and "they" are your enemies. If you're discussing a "Software-as-a-Service platform," repeat the full noun or a clear synonym rather than using a pronoun.

Maintain Entity Consistency: If you refer to your product as a "Revenue Intelligence Tool" in the intro, don't call it a "Sales Tracking App" in the conclusion. Inconsistency breaks the "entity mapping" the AI is trying to build.

The Three-Sentence Maximum: Keep paragraphs to no more than three sentences. This prevents "contextual drift," where a paragraph starts on one topic but wanders into another, confusing the vector embedding process.
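Two of the rules above, the three-sentence maximum and the warning about ambiguous pronouns, lend themselves to a simple lint pass. The sketch below is a rough heuristic under stated assumptions: sentences are split on end punctuation, and only sentences that open with "it," "this," or "they" are flagged. The function names are hypothetical.

```python
import re

# Pronouns the section above singles out as ambiguous sentence openers.
AMBIGUOUS_OPENERS = {"it", "this", "they"}

def split_sentences(paragraph: str) -> list[str]:
    """Naive split after ., ! or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]

def audit_paragraphs(text: str, max_sentences: int = 3) -> list[dict]:
    """Report sentence counts and vague openers for each paragraph."""
    report = []
    # Paragraphs are assumed to be separated by blank lines.
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    for number, paragraph in enumerate(paragraphs, start=1):
        sentences = split_sentences(paragraph)
        vague = [s for s in sentences
                 if s.split()[0].strip("\"'(").lower() in AMBIGUOUS_OPENERS]
        report.append({
            "paragraph": number,
            "sentence_count": len(sentences),
            "too_long": len(sentences) > max_sentences,
            "vague_openers": vague,
        })
    return report
```

Not every flagged pronoun is a problem, since a clear antecedent in the same sentence is fine. The report simply points an editor at the sentences most likely to lose meaning when extracted on their own.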

Semantic HTML as a Map

Use H1, H2, and H3 tags as a clear hierarchy of intent. An H1 tells the AI what the page is. An H2 tells it what the "category" of information is. An H3 provides the specific "facet." Never skip levels (e.g., jumping from H1 to H3), as this breaks the logical map the crawler uses to understand your site structure.
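Skipped heading levels are easy to miss by eye and easy to catch in code. Here is a minimal sketch using Python's built-in HTML parser; the function name `skipped_heading_levels` is an assumption for this example, and a page that opens with anything other than an H1 is also reported as a skip.

```python
from html.parser import HTMLParser

class _HeadingCollector(HTMLParser):
    """Record the level of every h1-h6 tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))

def skipped_heading_levels(html: str) -> list[tuple[int, int]]:
    """Return (previous_level, new_level) pairs where a level was skipped."""
    collector = _HeadingCollector()
    collector.feed(html)
    collector.close()
    skips = []
    previous = 0  # 0 means "no heading seen yet"
    for level in collector.levels:
        # Going deeper by more than one level (e.g. H1 to H3) is a skip.
        if level > previous + 1:
            skips.append((previous, level))
        previous = level
    return skips
```

Moving back up the hierarchy, such as from an H3 to the next H2, is not flagged, because that is how a new section normally begins.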

Strategy 4: Leverage Brand Signals and Semantic Co-occurrence

AI search engines don't just evaluate your content; they evaluate your brand's reputation and its relationship to specific topics. This is the evolution of E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness).

The Power of Semantic Co-occurrence

LLMs learn by association. If the term "Monday.com" consistently appears in proximity to the phrase "workflow automation" across authoritative sites, the AI builds a strong semantic bond between the two. When a user asks, "What’s the best tool for workflow automation?", the AI doesn't just search for the keywords; it retrieves the brands with the strongest co-occurrence score.
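The internal scoring of any given LLM is not public, but you can estimate co-occurrence in a sample of third-party pages yourself. The sketch below is a crude proxy, not a reproduction of how any model works: it counts how often a brand mention sits within a fixed word window of a topic phrase. The function names and the 50-word window are assumptions for illustration.

```python
import re

def _tokenize(text: str) -> list[str]:
    """Lowercase word tokens; dots inside names like 'monday.com' are kept."""
    raw = re.findall(r"[\w.]+", text.lower())
    return [t.strip(".") for t in raw if t.strip(".")]

def _positions(tokens: list[str], phrase: list[str]) -> list[int]:
    """Start indexes where the phrase occurs in the token list."""
    n = len(phrase)
    return [i for i in range(len(tokens) - n + 1) if tokens[i:i + n] == phrase]

def cooccurrence_rate(documents: list[str], brand: str, topic: str,
                      window: int = 50) -> float:
    """Share of brand mentions that appear within `window` words of the topic."""
    brand_tokens, topic_tokens = _tokenize(brand), _tokenize(topic)
    mentions = near_topic = 0
    for doc in documents:
        tokens = _tokenize(doc)
        topic_positions = _positions(tokens, topic_tokens)
        for b in _positions(tokens, brand_tokens):
            mentions += 1
            if any(abs(b - t) <= window for t in topic_positions):
                near_topic += 1
    return near_topic / mentions if mentions else 0.0
```

Comparing this rate for your brand and for a competitor across the same set of pages gives a rough sense of who is more tightly associated with a topic in that sample.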

Building Off-Site Signals

In the world of GEO, unlinked brand mentions are a big deal. LLMs build Knowledge Graphs of brands by scanning the entire web, not just following <a> tags.

UGC Platforms: Reddit, Quora, and YouTube are high-exposure platforms for generative engines. Active engagement here, like when the co-founder of Tally (Marie Martens) consistently answers form-builder questions on Reddit, trains the AI to associate that brand with specific value propositions (like "unlimited forms").

Wikipedia: This remains a primary training source for almost every major LLM. A factual, cited Wikipedia entry is perhaps the single strongest "authority signal" you can possess.

Third-Party Citations: Independent reviews on sites like G2 or Trustpilot and mentions in industry news outlets (e.g., TechCrunch) serve as the "social proof" that AI systems use to weight their recommendations.

Strategy 5: Prioritize Content Freshness and Recency

In the high-stakes world of AI discovery, recency is often the ultimate "tiebreaker." If two pages provide equally valid answers, the AI will almost always cite the one that appears most current. In fast-moving industries, a page from 2022 is effectively ancient history, regardless of how many backlinks it has.

The Freshness Workflow

To maintain your "citable" status, you must implement a rigorous update schedule for your "money pages."

dateModified Schema: Use this markup to signal to bots exactly when the content was last revised. This is more important than the "published" date.

Stat Refreshing: Replace any data point older than 18 months. AI search engines are increasingly adept at identifying outdated statistics.

Broken Link Audits: A single broken link in your references can signal "content decay" to a sophisticated LLM, lowering your trust score.
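Two items in the workflow above are simple to automate: emitting `dateModified` markup and flagging statistics older than 18 months. The sketch below builds a schema.org `Article` JSON-LD snippet and does a month-based age check. The function names and the example values are assumptions; only the `datePublished` and `dateModified` property names come from schema.org.

```python
import json
from datetime import date

def article_jsonld(headline: str, url: str, published: date, modified: date) -> str:
    """Build a JSON-LD <script> tag carrying datePublished and dateModified."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"

def is_stale(stat_date: date, today: date, max_age_months: int = 18) -> bool:
    """True when a statistic is older than the allowed number of months."""
    age_months = (today.year - stat_date.year) * 12 + (today.month - stat_date.month)
    return age_months > max_age_months
```

Update `modified` only when the content has genuinely changed, so the markup stays an honest signal of when the page was last revised.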

Strategy 6: Differentiate with Proprietary Data and Original Research

AI tools have a built-in bias toward uniqueness. If your article is just a synthesized summary of what already exists on the web, an AI has no reason to cite you, because it can simply synthesize the information itself. To earn the citation, you must provide the "Information Gain" that the AI cannot find elsewhere.

The 10,000-Query Insight

A massive study of 10,000 real-world queries revealed that pages containing original quotes and statistics had a 30-40% higher visibility rate in AI responses compared to content without them. AI systems are programmed to look for "evidence" to support their claims; providing that evidence makes you a high-value source.

Case Study: Rank Secure’s GEO Victory

Baruch Labunski, founder of Rank Secure, demonstrated the power of this approach by achieving a 40% growth in brand citations within just 90 days. He didn't just "fix" existing content; he added 120 new pages specifically targeting "how-to" and "comparison" keywords. By weaving original case studies and proprietary data into these pages, he forced the AI engines to recognize his brand as a unique authority rather than a generic participant.

Types of High-Value Original Content:

Benchmark Studies: Annual reports that set the standard for your industry.

Survey Data: Original insights gleaned from your customer base.

Unique Frameworks: Proprietary methodologies (e.g., the "Answer-First Pattern") that give the AI a new way to explain a concept.

Strategy 7: Build Topic Clusters for "Query Fan Out"

When a user asks a complex question, an AI system often performs a Query Fan Out. This is a process where the AI identifies all the sub-queries required to provide a comprehensive answer. To dominate a topic, you must provide the "Pillar and Cluster" structure that supports this fan-out.

The Digital Concierge Metaphor

Think of an AI search engine as a Digital Concierge. If a guest asks for a "good coffee experience," the concierge doesn't just want a list of cafes. They want to know about the bean types, the roasting process, the seating availability, and the proximity to the guest.

If your website only has a page on "Premium Coffee Beans," you lose. But if you have a pillar page on "Premium Coffee" linked to sub-topic clusters like "Arabica vs. Robusta," "Ethical Sourcing," and "Home Brewing Guides," the AI sees you as a comprehensive authority.

Internal Linking for RAG

Internal links are the "roads" that an AI bot follows during its retrieval phase. Use a tool such as Semrush's Keyword Strategy Builder to identify these clusters. By linking your pillar content to highly specific sub-pages, you make it easy for the AI to "collect" all the necessary components for its final synthesized response, significantly increasing the odds that you are the primary source cited.
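A pillar-and-cluster structure only helps if the links actually exist. The sketch below checks a simple map of internal links for gaps. The input format (a dictionary of page paths to the set of pages they link to) and the function name are assumptions for this example, and the check for links back to the pillar is a common convention added here rather than something this article prescribes.

```python
def cluster_link_gaps(links: dict[str, set[str]], pillar: str,
                      cluster_pages: list[str]) -> dict[str, list[str]]:
    """Find cluster pages that are not linked to or from the pillar page."""
    pillar_links = links.get(pillar, set())
    return {
        # Sub-pages the pillar never links to.
        "missing_from_pillar": sorted(
            p for p in cluster_pages if p not in pillar_links),
        # Sub-pages that never link back to the pillar (assumed convention).
        "missing_back_to_pillar": sorted(
            p for p in cluster_pages if pillar not in links.get(p, set())),
    }
```

Using the coffee example, a pillar at `/premium-coffee` with cluster pages for "Arabica vs. Robusta," "Ethical Sourcing," and "Home Brewing Guides" should produce two empty lists. Anything listed is an internal link to add.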

Tools of the Trade: Using an AI Visibility Platform

By 2026, manual tracking of AI results will be a fool's errand. AI recommendations are inherently inconsistent; asking ChatGPT the same question 100 times can produce 100 slightly different brand lists. To get a true picture of your performance, you need an AI visibility platform that can monitor millions of prompts across platforms.

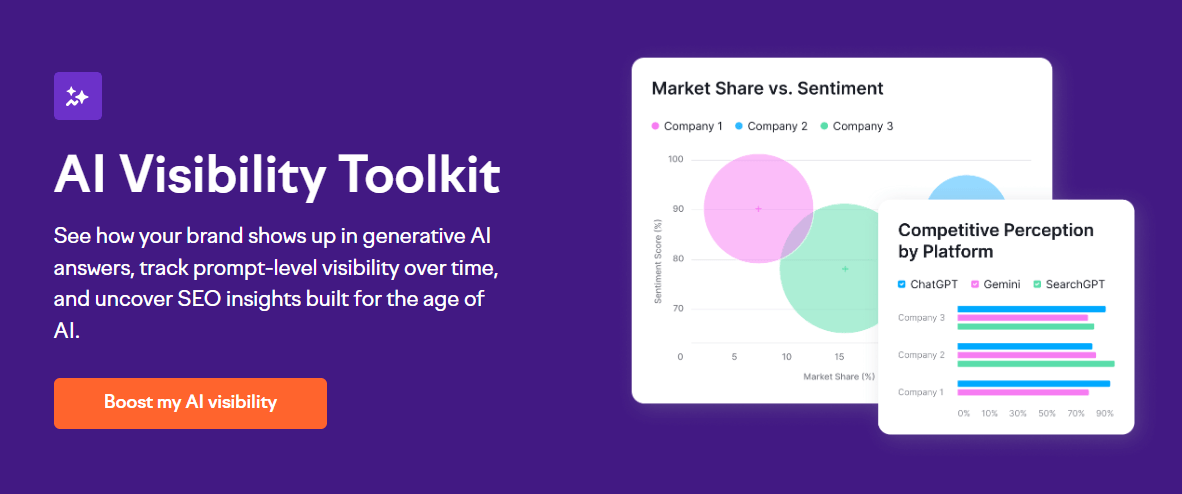

The Semrush AI Visibility Toolkit

A professional-grade AI visibility tool allows you to move beyond "snapshots" and into trend analysis. You should be tracking three key categories:

Share of Voice (SOV): How much real estate do you occupy in ChatGPT vs. Gemini vs. Perplexity? This reveals where your content is resonating and where it's being ignored.

Sentiment Analysis: Is the AI describing your brand as "the affordable option," "the premium choice," or "difficult to use"? Understanding the AI's "opinion" allows you to course-correct your brand messaging.

Source Opportunities: This is the most actionable data point. These are queries where your competitors are being cited, but you are not. This acts as a roadmap for your next content update.

Measuring Success: Metrics That Actually Matter

Traditional KPIs like "Keyword Ranking" are becoming secondary to a new set of metrics designed for the synthesized web.

To measure the success of your GEO efforts, you must track:

AI Mentions: Does your brand appear in the generated text?

AI Citations: Does the answer include a clickable link to your site? (Remember, Google AI Overviews cite Top 10 sources 85.79% of the time, so traditional ranking still helps here).

Position within Response: Are you the first recommendation or an afterthought at the bottom?

AI Share of Voice: Your brand’s presence in a category compared to the total volume of generated responses.
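Because AI answers vary from run to run, these four metrics are best computed over many sampled responses rather than a single one. The sketch below assumes you have already collected responses in a simple hypothetical format: each response is a dictionary with an ordered list of unique `brands` mentioned and a list of `cited_domains`. Share of voice is calculated here as your brand's mentions divided by all brand mentions in the sample, which is one common definition and an assumption on our part.

```python
def visibility_metrics(responses: list[dict], brand: str, domain: str) -> dict:
    """Aggregate mention, citation, position, and share-of-voice metrics."""
    total = len(responses)
    if total == 0:
        raise ValueError("at least one sampled response is required")
    mentioned = [r for r in responses if brand in r["brands"]]
    cited = [r for r in responses if domain in r["cited_domains"]]
    # 1-based position of the brand within each response that mentions it.
    positions = [r["brands"].index(brand) + 1 for r in mentioned]
    all_mentions = sum(len(r["brands"]) for r in responses)
    return {
        "mention_rate": len(mentioned) / total,
        "citation_rate": len(cited) / total,
        "avg_position": sum(positions) / len(positions) if positions else None,
        "share_of_voice": len(mentioned) / all_mentions if all_mentions else 0.0,
    }
```

Tracking these numbers per platform and per week makes the trend visible, which matters more than any single sample.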

The Monitoring Schedule

Daily: Necessary if you are running an active visibility campaign or launching a new product.

Weekly: The standard for monitoring trends and identifying "Source Opportunities."

Evaluation Prompts: Regularly perform manual sanity checks using these three prompt types:

"What is [Your Brand]?" (Tests for accuracy and sentiment).

"[Your Brand] vs [Top Competitor]" (Tests for positioning).

"Best tools for [Your Category]" (Tests for general visibility and SOV).

Common Obstacles and Frequently Asked Questions

The AI Content Penalty Myth

There is a persistent myth that Google penalizes AI-generated content. Let’s be clear: Google rewards helpfulness, quality, and user value. It does not care if the content was authored by a human, an AI, or a hybrid of both, provided the result is high-quality. Focus on the value, not the tool.

Do I Need to Rewrite Everything?

Start with your "power pages." Identify the queries where AI Overviews already appear frequently (roughly 88% of informational searches) and apply the "Answer-First" and "Chunking" strategies there first.

Platform Overlap Breakdown

Why can't you just optimize for Google? Because the overlap is minimal.

ChatGPT overlap with Google Top 10: 2.1%

Google AI Overviews overlap: 8.3%

Google AI Mode overlap: 15.5%

Perplexity overlap: 32%

Each platform has a different "appetite" for what it considers a trusted source. A multi-platform strategy is the only way to ensure total brand protection.

Frequently Asked Questions (FAQ)

1. Why Is Brand Visibility Important in AI Search Engines?

Brand visibility in AI search is critical because AI platforms are rapidly becoming the primary way users find information and make purchasing decisions.

Massive User Base: ChatGPT has over 900 million weekly users, and Google AI Overviews appear in approximately 88% of informational search queries.

Higher Conversion Rates: Research suggests that visitors coming from AI platforms convert 4.4x better than traditional organic search visitors because they have already completed their research when they arrive.

Business Value: AI search is projected to drive as much business value as traditional search by 2027, eventually surpassing it.

Consideration Set: If your brand is not mentioned in an AI-generated answer, you may remain entirely invisible to potential buyers who are using AI as a "digital concierge" to refine their options.

2. What Strategies Improve Brand Visibility in AI Search Results?

Seven primary strategies can boost your brand's presence in AI search:

Front-Load Content (Answer-First): Open every section with a direct, 40–60 word answer to the heading to make it easy for AI to extract.

Strengthen Technical Foundation: Ensure your site is mobile-friendly, fast, and uses Server-Side Rendering (SSR), as many AI crawlers struggle with JavaScript-heavy content.

Optimize for Extraction (Chunking): Structure pages with clear H2/H3 hierarchies and short paragraphs (max 3 sentences) so AI can easily "chunk" and vectorize your data.

Maintain Content Freshness: Regularly update pages with current statistics and research, as AI tools prioritize recent content as a "tiebreaker" for citations.

Build Semantic Co-occurrence: Get your brand mentioned near relevant keywords on authoritative third-party sites like Wikipedia, Reddit, and LinkedIn to help LLMs associate your brand with specific topics.

Provide Original Information: Include proprietary research, expert quotes, and first-hand case studies. AI systems favor "Information Gain" and unique data that they cannot synthesize from other generic sources.

Create Topic Clusters: Use a "Pillar and Cluster" internal linking structure to support "Query Fan Out," where an AI collects various sub-queries to provide a comprehensive answer.

3. When Should You Start Focusing on AI Search Visibility?

You should start focusing on AI search visibility immediately. This is not a distant prediction but an immediate market transition. Because AI search results are projected to match the business value of traditional search by 2027, brands that wait risk losing their competitive edge in a landscape where organic links are increasingly pushed down by AI Overviews.

4. Where to Begin with Enhancing Brand Visibility?

To begin, you should:

Measure Your Baseline: Use tools like the AI Visibility Toolkit to see how LLMs currently describe your brand and identify "Source Opportunities" where competitors are mentioned but you are not.

Audit Technical AI Health: Check for broken links or crawlability issues in your robots.txt and LLMs.txt files to ensure AI bots can access your content.

Update "Power Pages": Instead of rewriting everything, prioritize your most important pages, those that target high-value informational queries, and apply the "Answer-First" pattern to them.

5. How Does AI Affect Brand Visibility in Search Engines?

AI fundamentally changes the visibility dynamic by moving away from the "Ten Blue Links" toward synthesized responses.

Reduced Overlap: Ranking #1 on Google does not guarantee AI visibility. For example, only 2.1% of ChatGPT citations match URLs on the first page of Google.

Zero-Click Searches: AI often answers a user's question directly on the search page, which can decrease traditional click-through rates.

Agentic Search: The web is moving toward "agentic" systems where AI doesn't just provide links but analyzes data, recommends specific brands, and eventually performs tasks on behalf of the user.

Conclusion: Taking Control of Your AI Future

The rise of the agentic web represents the greatest opportunity for brand growth in a generation. SEO and GEO are not competing philosophies; they are complementary forces. Traditional SEO builds the foundation of authority and ranking, while GEO ensures that your brand is the one the AI chooses to synthesize, cite, and recommend.

The winners of the next decade will be the brands that audit their "AI Search Health" today. By structuring your content for extraction, leveraging original research, and building a clear semantic map of your expertise, you ensure that as search moves from "Ten Blue Links" to a synthesized conversation, your brand remains the loudest voice in the room. The era of the digital concierge is here. Make sure it knows your name.

Disclaimer: This page contains affiliate links. If you purchase through these links, we may earn a commission at no extra cost to you. We only recommend tools we trust.