Technical SEO in 2026: The AI Readability Crisis & AI Visibility

Introduction: The New Invisible Wall

For nearly three decades, technical SEO was defined by a singular, straightforward goal: making sure a browser could render a page so a human could read it, and ensuring a search engine crawler could follow the links. We obsessed over "crawl budgets" for Googlebot and optimized for "Time to Interactive" (TTI) to please our human users. However, as we move through 2026, the landscape has fractured. A new "invisible wall" has emerged, and it’s arguably more dangerous to brand health than any legacy indexing issue.

We are no longer just optimizing for browsers; we are optimizing for Large Language Models (LLMs), a practice now known as Generative Engine Optimization (GEO). These AI-driven search environments consume data in fundamentally different ways than the deterministic crawlers of the 2010s. Many publishers are waking up to a frustrating, almost haunting reality: their sites rank #1 for high-volume keywords in traditional search, yet they are completely invisible to the AI systems generating direct answers for users in the new AI Overviews.

This is the "AI Readability Crisis." It stems from a technical gap between browser-based rendering and AI crawler discovery. While your site might look pixel-perfect to a marketing director using Chrome, the underlying infrastructure may be indecipherable to the browser-less "machines" that now act as the primary gatekeepers of information. If a machine cannot ingest your data at the raw HTML level, it will not synthesize your expertise. If it cannot synthesize your expertise, it will not cite you.

The core objective of technical SEO has shifted from "being found" to "being understood." It is no longer enough to exist on the web; you must be readable to the systems that synthesize information for user queries at enormous scale. This article provides a comprehensive, strategist-level blueprint for bridging the gap between your web infrastructure and AI discovery, ensuring your brand is not just indexed, but cited and prioritized by the next generation of search.

The JavaScript Blind Spot: Why AI Can’t See Your Content

The most significant barrier to AI visibility in 2026 is what we’ve termed the "JavaScript (JS) blind spot." For years, web development has leaned heavily into Client-Side Rendering (CSR). Frameworks like React, Vue, and Angular allow for incredibly fluid user experiences by loading a shell of a page and then fetching content via JavaScript. This works beautifully for a user with a multi-core processor and a modern browser. It is a disaster for an LLM scraper.

Many AI crawlers function as "browser-less" machines: they fetch the raw HTML response without running a full rendering engine. They are designed to ingest and process information at extreme scale, prioritizing data throughput over rendering fidelity. When such a scraper encounters a site built entirely on CSR, it often sees only the "app shell": a skeleton of headers and footers with a giant hole where the valuable content should be. While Googlebot has improved its rendering capabilities over the years, the many LLM-based bots now crawling the web (from OpenAI, Anthropic, and others) do not always have the resource budget to wait for full "DOM hydration" or to execute complex event listeners.

If your product descriptions, pricing tables, or unique insights only appear after a script executes, there is a high probability that an AI crawler will see a blank page. This delay or total failure in rendering leads to what we call "Time to Discoverable Content" (TTDC) lag. To an AI, if the content isn't in the initial HTML response (the "raw source"), it effectively doesn't exist.
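One rough way to test this is to check whether your key phrases survive in the raw HTML once script and style bodies are ignored. The sketch below uses only Python's standard library. The names `visible_text` and `missing_phrases` and the sample markup are illustrative assumptions, not part of any Semrush tool, and a real check would first fetch the page without executing JavaScript.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text nodes while skipping <script> and <style> bodies."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only text that sits outside script/style elements.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html):
    """Return the text a non-rendering scraper could read from raw HTML."""
    parser = VisibleTextExtractor()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


def missing_phrases(raw_html, phrases):
    """Return the phrases that do NOT appear in the raw HTML's visible text."""
    text = visible_text(raw_html).lower()
    return [p for p in phrases if p.lower() not in text]


# Hypothetical sample pages: a client-side shell and a server-rendered page.
csr_page = '<html><body><div id="root"></div><script>render("Price: $49")</script></body></html>'
ssr_page = "<html><body><h1>Acme Widget</h1><p>Price: $49</p></body></html>"

print(missing_phrases(csr_page, ["Price: $49"]))
print(missing_phrases(ssr_page, ["Price: $49"]))
```

In the client-side shell the price exists only inside a script, so it is reported as missing; in the server-rendered page it is found.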

The Machine Rendering Paradox

Modern web development prioritizes "Time to Interactive" (TTI) for human users: the moment a user can click a button. However, AI crawlers are optimized for "Time to Ingestion." When critical content is locked behind JavaScript, you create a paradox: you have built a site that is highly interactive for humans but completely invisible to the very machines that are supposed to send those humans to your site. To an AI, a site that requires JS execution to display its primary value proposition is a site that is essentially "dark."

The technical grit of this problem lies in the "Render-Ingest Gap." In our technical audits, we frequently see sites where the "Raw HTML" is 2KB of script tags, while the "Rendered HTML" is 50KB of rich text. That 48KB delta is the "Invisible Wall." If your expertise is in that delta, the AI may hallucinate an answer or, worse, pull the answer from a competitor whose site provides that information in plain, static HTML.
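The arithmetic behind that delta is simple enough to script. This is a minimal sketch that assumes you already have both versions of a page as strings; `render_ingest_gap` is a hypothetical helper name, and byte size is only a crude proxy because markup counts as much as prose.

```python
def render_ingest_gap(raw_html: str, rendered_html: str) -> dict:
    """Compare the byte size of the raw response with the rendered DOM."""
    raw_bytes = len(raw_html.encode("utf-8"))
    rendered_bytes = len(rendered_html.encode("utf-8"))
    # Content that exists only after JavaScript runs.
    delta = max(rendered_bytes - raw_bytes, 0)
    hidden_share = delta / rendered_bytes if rendered_bytes else 0.0
    return {
        "raw_bytes": raw_bytes,
        "rendered_bytes": rendered_bytes,
        "delta_bytes": delta,
        "hidden_share": hidden_share,
    }


# Stand-in payloads matching the 2KB vs 50KB example above.
gap = render_ingest_gap("x" * 2 * 1024, "x" * 50 * 1024)
print(gap["delta_bytes"] // 1024, "KB hidden,", f"{gap['hidden_share']:.0%} of the rendered page")
```

For the 2KB and 50KB figures, the hidden share works out to 48/50, or 96% of the rendered page.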

Infrastructure Over Protocol: The 2026 Hierarchy of Needs

In the rush to stay relevant in the AI era, many technical teams make the mistake of chasing experimental protocols, obscure new "AI-ready" file formats, or proprietary APIs. However, our data at Semrush suggests a different reality: the most successful sites in the AI era are those that prioritize "foundation over protocol."

AI systems do not want novelty; they want semantic clarity and high-speed ingestibility. Clean HTML and logical site architecture have returned as the most critical elements of a technical SEO strategy. Before you worry about how to interact with a specific LLM’s API, you must ensure your site's core infrastructure is accessible to any machine, regardless of its sophistication.

The Hierarchy of AI Readability

To win in 2026, you must build your technical roadmap according to this three-tier hierarchy:

Clean, Static HTML (The Base): This is the bedrock. Moving critical content (claims, data, specifications, and primary arguments) away from JavaScript dependence ensures that even the most basic scraper can consume your message. This often requires a shift toward Server-Side Rendering (SSR) or Static Site Generation (SSG). When the server sends a fully populated HTML document, the AI can tokenize the text immediately without executing a single line of JS.

Consistent Entity Modeling: AI understands the world through entities (distinct people, places, things, and concepts) rather than just keywords. Your site structure should logically reflect these entities. For example, a "Product" shouldn't just be a URL; it should be part of a nested architecture that links it to its "Manufacturer," "Category," and "User Reviews" in a way that allows an AI to build a clear knowledge graph of your expertise.

Robust Structured Data (The Apex): If HTML is the body of your content, Schema is the identity card. It provides the explicit context that machines need to categorize your information without ambiguity. In 2026, Schema is not about "Rich Snippets"; it is about "Entity Disambiguation." It tells the machine: "This is not just a string of text about 'Apple'; it is specifically about 'Apple Inc.' the corporation."

By focusing on these three layers, you move from a reactive "tinkering" mindset to a proactive "infrastructure" mindset. You are building a site that is "correct by design" for any machine that encounters it.
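To make the entity layer concrete, here is a hedged sketch of the "Product" example expressed as nested schema.org JSON-LD and wrapped in a script tag that a server could emit in the raw HTML. The product, manufacturer, and reviewer names are placeholders, and `to_jsonld_script` is a hypothetical helper, not a library function.

```python
import json

# Placeholder entity: a Product linked to its manufacturer, category, and a review.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Example Widget",
    "category": "Widgets",
    "manufacturer": {"@type": "Organization", "name": "Example Manufacturing Co."},
    "review": [
        {
            "@type": "Review",
            "author": {"@type": "Person", "name": "Example Reviewer"},
            "reviewRating": {"@type": "Rating", "ratingValue": 5},
        }
    ],
}


def to_jsonld_script(entity):
    """Serialize an entity as a JSON-LD script tag for the initial HTML response."""
    # Escape "</" so a string value cannot close the script element early.
    payload = json.dumps(entity, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


print(to_jsonld_script(product))
```

Because the tag is generated on the server, the relationships are present in the raw source and do not depend on client-side JavaScript.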

Schema as Machine Identity: Beyond Basic Rich Snippets

In the previous era of SEO, Schema Markup was often viewed as a "nice-to-have" or an "optional boost": a way to get a star rating on a search result or a recipe image. Today, Schema has transitioned into a "machine identity requirement." It is the primary tool for telling an AI exactly who a brand is, what it stands for, and why it is an authority.

The risk of poor Schema implementation in 2026 isn't just "lower rankings"; it is the Unattributed Citation Risk. Imagine your team spends months producing a definitive industry report. An AI processes that report and provides a perfect answer to a user's question. However, because your site lacked clear Organization Schema or used malformed Website Schema, the AI cannot confidently link the data back to your brand. It may instead cite a competitor, who happened to have a cleaner technical foundation, as the source. You provided the value; they got the credit.

Organization Schema: Your Brand's Anchor

Organization Schema is the definitive record of your company's existence in the digital world. In an era of AI hallucinations, LLMs look for "anchors" of truth. By implementing comprehensive Organization Schema, you define your official name, social profiles, parent companies, and core purpose. This ensures that when an AI synthesizes your content, it has a "Machine ID" to attach to that information, reducing the chance of your insights being attributed to a third-party aggregator or a rival brand.
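As an illustration, the fields named above map onto schema.org `Organization` properties roughly as follows. Every value here is a placeholder for a fictional company, and the choice of properties is an assumption about what "comprehensive" might include, not a required set.

```python
import json

# Placeholder values for a fictional brand.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Corp",                      # official name
    "legalName": "Example Corporation, Inc.",
    "url": "https://www.example.com",
    "description": "Example Corp builds widgets for industrial customers.",  # core purpose
    "sameAs": [                                  # social profiles
        "https://www.linkedin.com/company/example-corp",
        "https://x.com/examplecorp",
    ],
    "parentOrganization": {"@type": "Organization", "name": "Example Holdings"},
}

print(json.dumps(organization, indent=2))
```

The printed JSON would typically be placed in a `<script type="application/ld+json">` element in the page's raw HTML.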

Website Schema: The Navigational Blueprint

Website Schema provides the blueprint of your site’s internal hierarchy and search capabilities. It informs AI systems about your site's structure, allowing them to understand the relationship between your homepage and your deep-link resources. This helps AI-powered search engines provide more accurate "deep citations," directing users to the specific technical spec sheet or whitepaper they need, rather than just dumping them on your homepage.
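A sketch of what this might look like in practice: schema.org spells the type `WebSite`, and it mainly carries the site name, URL, and an optional search action, while the path from the homepage to a deep resource is more commonly expressed with a separate `BreadcrumbList`. All URLs and names below are placeholders, and whether a given AI system uses these fields is not something the markup can guarantee.

```python
import json

# Site-level identity and internal search capability (placeholder URLs).
website = {
    "@context": "https://schema.org",
    "@type": "WebSite",
    "name": "Example Corp",
    "url": "https://www.example.com",
    "publisher": {"@type": "Organization", "name": "Example Corp"},
    "potentialAction": {
        "@type": "SearchAction",
        "target": {
            "@type": "EntryPoint",
            "urlTemplate": "https://www.example.com/search?q={search_term_string}",
        },
        "query-input": "required name=search_term_string",
    },
}

# Path from the homepage to one deep resource, such as a spec sheet.
breadcrumbs = {
    "@context": "https://schema.org",
    "@type": "BreadcrumbList",
    "itemListElement": [
        {"@type": "ListItem", "position": 1, "name": "Home", "item": "https://www.example.com/"},
        {"@type": "ListItem", "position": 2, "name": "Resources", "item": "https://www.example.com/resources/"},
        {"@type": "ListItem", "position": 3, "name": "Widget Spec Sheet", "item": "https://www.example.com/resources/widget-spec/"},
    ],
}

print(json.dumps([website, breadcrumbs], indent=2))
```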

In 2026, a strategist must treat Schema as a "deterministic layer" that sits on top of the "probabilistic layer" of LLM processing. While we cannot always control how an AI interprets our prose, we can absolutely control the structured data that defines our identity.

The Diagnostic Workflow: Auditing for AI Readability

To bridge the gap between your infrastructure and AI discovery, you must move from theory to high-precision diagnostics. You cannot fix what you cannot see. This requires a technical audit process that looks specifically at the "Render-Ingest Gap" using the Semrush Site Audit tool.

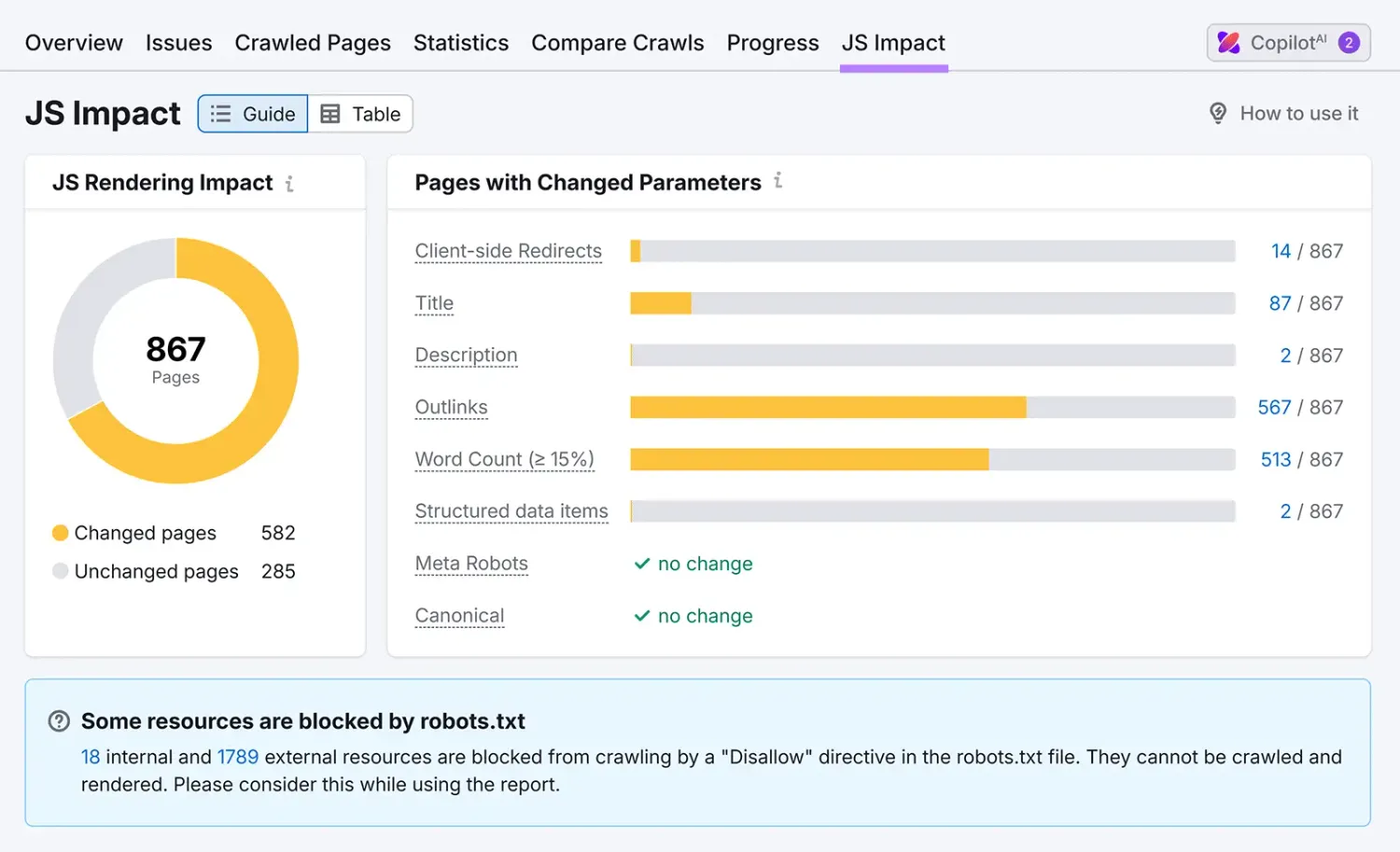

The most critical feature in this workflow is the JS Impact Report. As a technical strategist, your goal is to minimize the "Delta" between the raw HTML and the rendered page. When you run a Site Audit, the tool compares what a crawler sees initially (the raw code) against what is visible after JavaScript execution. If your core "value" content (the text that answers a user's "why") only appears in the rendered version, you have identified a primary AI visibility barrier.

The Technical AI Barrier Matrix

The "Senior Strategist" workflow involves looking at the JS Impact Report and asking: "If I were a browser-less scraper with only 200ms to look at this page, would I walk away with the answer?" If the answer is no, the technical team must prioritize Server-Side Rendering for that content.

Closing the Loop: Measuring AI Visibility and Citations

Technical fixes are only half the battle; the other half is validation. In the era of Semrush One, we finally have the ability to connect the "plumbing" of technical SEO directly to the "outcomes" of AI discovery. We can "close the loop" by seeing how a specific fix, like moving a data table from a client-side React component to a static HTML <table>, influences real-world AI mentions.
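The table migration mentioned above could look something like this on the server side. It is a minimal sketch, not a framework recommendation: `render_table` is a hypothetical helper and the pricing rows are made-up data.

```python
from html import escape


def render_table(headers, rows):
    """Build a static HTML table so the data is present in the raw response."""
    head = "".join(f"<th>{escape(str(h))}</th>" for h in headers)
    body = "".join(
        "<tr>" + "".join(f"<td>{escape(str(cell))}</td>" for cell in row) + "</tr>"
        for row in rows
    )
    return f"<table><thead><tr>{head}</tr></thead><tbody>{body}</tbody></table>"


# Made-up pricing data that previously lived in a client-side component.
table_html = render_table(
    ["Plan", "Monthly price"],
    [["Basic", "$10"], ["Pro & Team", "$25"]],
)
print(table_html)
```

Escaping each cell keeps characters such as `&` and `<` from breaking the markup, which matters when the table is assembled from raw data.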

Once you have removed JavaScript barriers or updated your Organization Schema, you must monitor the following metrics to validate the infrastructure change:

AI Overview Tracker

This tool monitors whether your pages are appearing in Google’s AI-generated answers. It is the ultimate litmus test for technical health. If you see a page that ranks #3 in traditional search but is absent from AI Overviews, you likely have a rendering or schema gap. By tracking the "AI Overview Presence" before and after a technical update, you can demonstrate the ROI of moving to SSR.

AI Citations

This tool tracks when and where various AI platforms (beyond just Google) cite your content as a primary source. This is the "Citation Gap" analysis. If you find that a competitor is being cited for a topic you've covered more extensively, go back to the Site Audit. You will often find a "Broken Internal Link" or a "Rendering Issue" that prevented the AI from seeing your superior data. Fixing that issue removes a direct obstacle to a "Citation Gain."

Integrating Traditional SEO with AI Discovery

The goal of modern technical SEO is "dual visibility." We are living in a hybrid world where traditional search results and AI-generated answers coexist. You should not sacrifice legacy organic traffic in a desperate bid for AI visibility. Instead, use the Semrush One suite to manage both simultaneously.

By using Position Tracking alongside the AI Visibility Index, you can ensure that your site is winning in both environments. This is where Generative Engine Optimization (GEO) meets traditional SEO. GEO is essentially the practice of making your site's technical and content architecture "optimal" for LLM ingestion.

Key KPIs for Dual Visibility Practitioners

Traditional Keyword Growth: Monitored via Position Tracking to ensure core organic traffic remains stable or grows during infrastructure changes.

AI Citation Frequency: Tracking how often LLMs refer to your domain as a source of truth.

Rendering Parity Score: A custom metric derived from the Site Audit JS Impact Report, aiming to reduce the difference between raw HTML and rendered content to <5%.

Competitor AI Gap: Using Organic Research and the AI Visibility Index to see if competitors are gaining ground in AI-generated answers. If their traditional rankings are flat but their AI visibility is rising, they have likely optimized their infrastructure for AI readability.
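Because the Rendering Parity Score is described as a custom metric, there is no single official formula. One possible definition, sketched below, is the share of words in the rendered page that are missing from the raw HTML's visible text. The function names, the word-level comparison, and the sample strings are all assumptions; the 5% threshold comes from the KPI above.

```python
import re
from collections import Counter


def word_counts(text):
    """Count lowercase word tokens in a block of visible text."""
    return Counter(re.findall(r"\w+", text.lower()))


def rendering_gap(raw_text, rendered_text):
    """Share of rendered words that a non-rendering crawler would not see."""
    raw, rendered = word_counts(raw_text), word_counts(rendered_text)
    total = sum(rendered.values())
    if total == 0:
        return 0.0
    # Counter subtraction keeps only words that appear more often after rendering.
    missing = rendered - raw
    return sum(missing.values()) / total


def meets_parity_target(raw_text, rendered_text, threshold=0.05):
    """True when the gap is below the target (5% by default)."""
    return rendering_gap(raw_text, rendered_text) < threshold


raw_text = "Acme Widget"
rendered_text = "Acme Widget costs 49 dollars and ships today"
print(rendering_gap(raw_text, rendered_text))        # 6 of 8 words are missing
print(meets_parity_target(raw_text, rendered_text))
```

Here six of the eight rendered words never appear in the raw text, so the page is far from the target.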

The Recovery Pattern: A Week-by-Week Roadmap

Practitioners who follow a structured "Recovery Pattern" often report major visibility gains in both traditional and AI search within just a few weeks. This isn't about "gaming" a system; it's about cleaning up a messy infrastructure to let the value shine through.

The 30-Day Technical Recovery Plan

Week 1: The Rendering Audit. Use the Semrush Site Audit and the JS Impact Report to identify "Blind Spot" pages. Focus on high-value product pages and cornerstone blog posts.

Week 2: The Critical Content Migration. Work with your dev team to migrate "critical content" (the meat of the page) from client-side JS to static HTML. Ensure that headers, primary claims, and data tables are present in the raw source code.

Week 3: The Machine Identity Implementation. Deploy comprehensive Organization and Website Schema. Use the Semrush "Structured Data" check to ensure there are zero syntax errors. This ensures that when the AI finds your now-visible content, it knows exactly who to credit.

Week 4: Validation and GEO Monitoring. Use the AI Overview Tracker and AI Citations tool to measure discovery gains. Simultaneously, use Position Tracking to ensure your traditional search rankings are recovering or improving as a result of a faster, cleaner site structure.
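For the Week 3 syntax check, a quick local pre-flight can catch malformed JSON-LD before you run a full audit. The sketch below only confirms that each `application/ld+json` block in the raw HTML parses as JSON. It does not validate schema.org vocabulary and is not a substitute for a structured data checker; `check_jsonld` and the sample markup are illustrative assumptions.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the bodies of <script type="application/ld+json"> elements."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def check_jsonld(raw_html):
    """Return (ok, detail) per block: the @type on success, the error otherwise."""
    collector = JsonLdCollector()
    collector.feed(raw_html)
    collector.close()
    results = []
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError as exc:
            results.append((False, str(exc)))
        else:
            detail = data.get("@type") if isinstance(data, dict) else None
            results.append((True, detail))
    return results


# Sample markup: one valid block and one with a trailing comma.
good = '<script type="application/ld+json">{"@type": "Organization", "name": "Example"}</script>'
bad = '<script type="application/ld+json">{"@type": "Organization",}</script>'
for ok, detail in check_jsonld(good + bad):
    print("OK" if ok else "SYNTAX ERROR", detail)
```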

Benchmarking Against the Competition

In the AI era, technical health is relative. You are competing for a limited number of "Citation Slots" in an AI-generated answer. To understand where you stand, you must benchmark your site against competitors who are already winning the "Readability War."

The AI Visibility Index is the crucial tool for this stage. It allows you to identify which brands in your niche are currently leading in AI search features. Once you identify a leader, use Organic Research to look at their technical history. Did they recently undergo a site migration? Are they using SSR? Do they have 100% Schema coverage?

By combining the AI Visibility Index with competitive technical audits, you can determine if a rival’s dominance is due to their brand name or simply a superior technical infrastructure that makes their content more "digestible" for LLMs. If it's the latter, you have a clear technical roadmap to overtake them.

Conclusion: The Future of Technical SEO

The transition we are witnessing in 2026 is a fundamental shift from "SEO for humans" to "SEO for machines that serve humans." The traditional principles of crawlability and indexability have not disappeared; they have simply become more rigorous and less forgiving.

Technical SEO is no longer a "marketing" task; it is an infrastructure challenge. If your site is built on a foundation of clean HTML, transparent entity modeling, and robust structured data, your brand will thrive and be cited across the AI ecosystem. If you continue to hide your value behind layers of fragile JavaScript and ambiguous, non-semantic structures, you will remain on the wrong side of the invisible wall.

Success in this new landscape starts with an audit, not a theory. Stop guessing why your brand is being left out of the AI conversation. Use Site Audit, AI Overview Tracker, and AI Citations to diagnose your rendering gaps and start your recovery today. The future of search belongs to those who are visible to the machines that lead the way.

Disclaimer: This page contains affiliate links. If you purchase through these links, we may earn a commission at no extra cost to you. We only recommend tools we trust.