How to Implement AI in Your SEO Strategy: Step-by-Step Guide!

Introduction: The 2026 Paradigm Shift

As we navigate the search landscape of 2026, the traditional search engine results page (SERP) has been fundamentally disrupted. We have moved beyond the "list of links" into a distributed network of AI-generated answers, agentic assistants, and conversational interfaces. In this "Search Everywhere" reality, the dominant discovery layer is "AI Mode," where information is interpreted and filtered before the user ever sees a browser tab.

The core objective has shifted: we are pivoting from visibility to eligibility. Traditional ranking is being superseded by "citation share." Success is no longer about holding "position one" for a keyword; it is about being the synthesized "truth" that an AI model presents to the user. For informational queries, the "Web Guide" has become the default SERP format, blending traditional search logic with generative "fan-outs" that anticipate the user's entire journey.

State of the industry: Semrush reports that the average AI search visitor is worth 4.4x as much as a traditional organic search visitor, which it attributes to higher intent and pre-qualification. Projections indicate that AI search traffic, currently growing at 527% year-over-year, will surpass traditional search traffic by 2028.

Phase I: Predictive Research and Intent Modeling

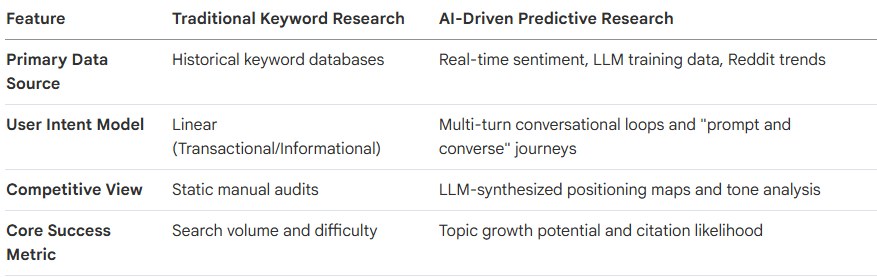

In 2026, keyword research has evolved into Relevance Engineering. We no longer target isolated strings; we optimize for the "Query Fan-out": the web of natural language variations and follow-up constraints triggered by a central intent.

Traditional Keyword Research vs. AI-Driven Predictive Research

The 4-Step Persona-Driven Keyword Universe

Extract Latent Pain Points: Use LLMs (ChatGPT/Claude) to ingest competitor content and customer support logs to identify the specific constraints users face.

Map Intent Clusters: Move from broad terms to context-rich queries. Instead of "CRM pricing," target: "Best CRM for a 50-person agency with Salesforce integration under $150/user."

Model AI Grounding (Technical Pathway): Use the Google Gemini API or the ChatGPT Developer Console (search_prob and search_model_queries) to identify which prompts trigger real-time web searches ("grounding"). Focus your efforts on these grounded prompts.

Optimize for Resonance: Align assets with specific audience interaction patterns rather than focusing on keyword density.
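One way to picture the query fan-out described in the steps above is as the combination of a base intent with a set of constraint dimensions. The sketch below is only an illustration: the `fan_out` function and the constraint values are hypothetical, loosely modeled on the CRM example, and are not taken from any tool named in this guide.

```python
from itertools import product


def fan_out(base_intent: str, constraints: dict[str, list[str]]) -> list[str]:
    """Combine a base intent with one value from each constraint dimension."""
    dimensions = list(constraints.values())
    queries = []
    for combo in product(*dimensions):
        # Each combo holds one phrase per dimension, in dictionary order.
        queries.append(" ".join([base_intent, *combo]))
    return queries


# Hypothetical constraint values, for illustration only.
constraints = {
    "audience": ["for a 50-person agency", "for a solo consultant"],
    "integration": ["with Salesforce integration", "with HubSpot integration"],
    "budget": ["under $150/user"],
}

queries = fan_out("best CRM", constraints)
for query in queries:
    print(query)
```

Each generated string is a candidate prompt to test for grounding. In practice the constraint values would come from the pain points extracted in the first step rather than from a hand-written dictionary.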

Phase II: Establishing Technical "Vector Index Hygiene"

Technical SEO is now the "translation layer" for AI systems. To win in the era of In-context Ranking (ICR), your site must be machine-readable, not just crawlable.

Technical Checklist for Agentic Readiness

[ ] Implement Schema.org Semantic Entities: Explicitly define Organizations, Persons, and Products to build a digital knowledge graph for AI ingestion.

[ ] Optimize for "Agentic Speed": Achieve load times of <300ms. AI agents favor fast pages whose content is available as plain text, and they often skip content that only appears after JavaScript rendering.

[ ] Parameter Cleanliness: Ensure at least 95% of crawler requests go to parameter-free URLs, so that the crawl budget available to AI agents is not wasted on parameter variants of the same page.

[ ] Configure llms.txt: Place this file at the root domain to provide a prioritized, machine-readable table of contents for Large Language Models.

[ ] Update AI-Specific Crawl Rules: Configure robots.txt to allow primary AI bots.

Primary AI Crawlers to Prioritize:

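GPTBot (OpenAI): Collects content that may be used to train OpenAI's models. OpenAI documents a separate agent, OAI-SearchBot, for surfacing sites in ChatGPT search results, so allow both if citation is the goal.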

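Google-Extended: A robots.txt control token rather than a separate crawler. It governs whether content Google crawls may be used for Gemini model training and grounding; Google states that it does not affect inclusion or ranking in Google Search.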

PerplexityBot: Indexes content for real-time citations and answer generation.
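Before deploying crawl rules, it can help to check them locally. The sketch below uses Python's standard `urllib.robotparser` to test whether the user agent tokens listed above may fetch a given URL. The robots.txt content, the `example.com` URLs, and the `check_access` helper are illustrative assumptions, not a recommended policy.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: Google-Extended
Disallow: /private/

User-agent: *
Disallow: /admin/
"""

AI_AGENTS = ["GPTBot", "Google-Extended", "PerplexityBot"]


def check_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Report, per user agent token, whether the rules allow fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


public = check_access(ROBOTS_TXT, "https://example.com/blog/post", AI_AGENTS)
private = check_access(ROBOTS_TXT, "https://example.com/private/report", AI_AGENTS)

for agent in AI_AGENTS:
    print(f"{agent}: public={public[agent]} private={private[agent]}")
```

The standard-library parser applies its own matching rules, which may differ in edge cases from how an individual crawler interprets the file, so treat the result as a sanity check rather than a guarantee.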

Phase III: Building Defensible Content Assets

"Average" content is dead. AI can generate summaries; it only cites content that provides Information Gain, proprietary insights it cannot replicate. In 2026, the internet adopts a "Machine-Shaped Voice": concise, modular, and focused on extractable meaning.

The "Seen and Trusted" Framework

"Seen": Achieved through the Vector Index Hygiene and technical speed established in Phase II.

"Trusted": Built via the third-party validation and reputation signals detailed in Phase V.

Defensible Asset Pillars

Proprietary Data: Use first-party benchmarks, original surveys, and internal customer transformation data that LLMs cannot scrape from the public web.

Novel Frameworks: Develop structured, named processes. Unique frameworks provide intellectual property that machines can reference by name.

Expert-Led Insights: Infuse content with "Atomic Claims": concise, independent, declarative statements designed to survive being paraphrased by AI without losing clarity.

Writing for Ingestion

Approximately 70% of users scan only the first third of an AI response. To ensure your brand is the cited source, provide your insight-led summary in the first 30% of the content. Use a modular, declarative structure that makes your data "citation-ready" for RAG systems.
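The first-30% guideline can be checked mechanically. The sketch below measures where a key claim first appears as a fraction of the text length. The function names, the sample article, and the use of character position as a proxy for "the first 30% of the content" are all assumptions made for illustration.

```python
from typing import Optional


def claim_position(text: str, claim: str) -> Optional[float]:
    """Return where the claim first appears, as a fraction of text length."""
    index = text.lower().find(claim.lower())
    if index == -1:
        return None  # The claim does not appear at all.
    return index / len(text)


def is_front_loaded(text: str, claim: str, threshold: float = 0.30) -> bool:
    """True if the claim starts within the first `threshold` share of the text."""
    position = claim_position(text, claim)
    return position is not None and position <= threshold


# Placeholder article text, for illustration only.
article = (
    "Key finding: response time halved after the migration. "
    + "Background detail follows here. " * 20
    + "Closing note: pricing is unchanged."
)

print(is_front_loaded(article, "response time halved"))
print(is_front_loaded(article, "pricing is unchanged"))
```

Exact substring matching is deliberately simple. A real audit would probably need fuzzier matching, since an edited draft rarely repeats a claim word for word.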

Phase IV: Optimizing for the Agentic Era

Autonomous AI agents now drive roughly 33% of search activity, performing tasks like product comparison and booking on behalf of users.

Agentic Commerce Protocol (ACP): Structure your e-commerce feeds to allow AI assistants to facilitate direct, zero-click transactions.

Model Context Protocol (MCP): MCP is an open standard for connecting AI assistants to external tools and data sources. For media brands, exposing a catalog through an MCP server is one way to let agents find and surface specific files.

API-Ready Infrastructure: For SaaS and data-heavy sites, provide public APIs or clean RSS feeds. Agents prefer structured data over parsing complex HTML.

Non-Rendering Scannability: Ensure your site uses clean HTML5 and logical H1-H6 hierarchies. AI agents often ignore persuasion-heavy design and focus on machine-readable factual precision.
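The heading-hierarchy point above lends itself to an automated check. The sketch below uses Python's standard `html.parser` to collect heading levels and flag skipped levels. The `heading_issues` helper is hypothetical, and the rule of exactly one H1 per page is a common convention assumed here, not a requirement stated in this guide.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect the level (1-6) of every heading tag, in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return a list of human-readable problems with the heading outline."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    issues = []
    if levels.count(1) != 1:  # Assumed convention: one H1 per page.
        issues.append(f"expected exactly one h1, found {levels.count(1)}")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"h{previous} is followed by h{current} (skipped level)")
    return issues


good = "<h1>Guide</h1><h2>Setup</h2><h3>Install</h3><h2>Usage</h2>"
bad = "<h1>Guide</h1><h3>Install</h3>"

print(heading_issues(good))
print(heading_issues(bad))
```

Moving back up the outline, for example from an H3 to an H2, is treated as valid. Only downward jumps that skip a level are reported.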

Phase V: Brand Authority and "Reputation Engineering"

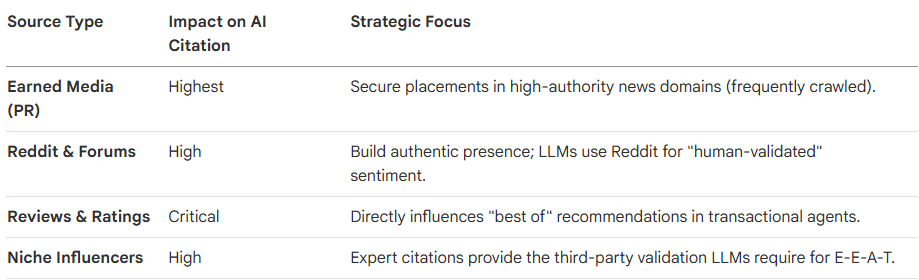

In 2026, reputation is your data record. AI systems assemble brand understanding from fragmented external signals to decide which brand is the "safest" recommendation. Your goal is Influence Optimization: shaping the informational environment so machines can only understand you in one specific way.
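One concrete way to reduce fragmentation is to publish a single Organization entity that links to the brand's official profiles through the Schema.org `sameAs` property. The sketch below builds that JSON-LD in Python. The company name, URLs, and the `organization_jsonld` helper are placeholders, and which profiles to list is a judgment call this guide does not prescribe.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Build a Schema.org Organization entity as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,  # Official profiles that refer to the same entity.
    }
    return json.dumps(data, indent=2)


# Placeholder values, for illustration only.
markup = organization_jsonld(
    name="Example Co",
    url="https://example.com",
    same_as=[
        "https://www.linkedin.com/company/example-co",
        "https://www.youtube.com/@exampleco",
    ],
)

# The JSON-LD is typically embedded in the page inside a script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Keeping the name and URL identical across every page and profile is the point of the exercise: the markup only helps if the signals it links to tell the same story.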

The Trust Signal Matrix

Phase VI: The AI SEO Toolstack for 2026

Modern visibility requires tools that track your presence within the AI discovery layer rather than just counting blue links.

Semrush AI Visibility Toolkit: Benchmarks your "AI Visibility Score" and identifies gaps where competitors are being cited instead of you.

Ahrefs Brand Radar: Monitors brand mentions in Reddit threads and video content, showing how your brand is perceived in community-led ecosystems.

BrightEdge Instant: Uses AI to identify 100x more keyword variations by mapping real-time topic clusters.

Frase SEO AI Agent: Automates SERP research and creates citation-optimized content briefs via natural language prompts.

Surfer AI Tracker: Monitors citation frequency across AI engines and provides source transparency for your branded mentions.

Phase VII: Measuring Success with New KPIs

Traditional vanity metrics like "Total Keyword Rankings" are obsolete in a zero-click, AI-mediated world. Track these Five Essential AI Search Metrics:

AI Presence Rate: The % of target intent clusters where your brand appears in AI Mode/Overviews.

Citation Authority: The frequency with which your brand is cited as the primary or recommended source within an answer.

Share of AI Conversation: Your "semantic real estate" within conversational threads compared to direct competitors.

Prompt Effectiveness: A measure of how well your content answers natural-language prompts in LLM grounding queries.

Response-to-Conversion Velocity: Tracking how quickly AI-influenced prospects move from discovery to purchase.
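The first two metrics above reduce to simple ratios once AI answers have been sampled. The sketch below assumes a hypothetical log of observations, one per sampled answer, recording the intent cluster and the brands cited in order of appearance. The data structure, the brand names, and the reading of "Citation Authority" as the share of answers where the brand is cited first are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    cluster: str             # Intent cluster the sampled prompt belongs to.
    cited_brands: list[str]  # Brands cited in the answer, in order.


def ai_presence_rate(observations: list[Observation], brand: str) -> float:
    """Share of intent clusters in which the brand appears at least once."""
    clusters = {o.cluster for o in observations}
    present = {o.cluster for o in observations if brand in o.cited_brands}
    return len(present) / len(clusters) if clusters else 0.0


def primary_citation_rate(observations: list[Observation], brand: str) -> float:
    """Share of sampled answers in which the brand is the first one cited."""
    if not observations:
        return 0.0
    primary = sum(
        1 for o in observations if o.cited_brands and o.cited_brands[0] == brand
    )
    return primary / len(observations)


# Made-up sample data, for illustration only.
log = [
    Observation("crm pricing", ["Acme", "Rival"]),
    Observation("crm pricing", ["Rival"]),
    Observation("crm integrations", ["Rival", "Acme"]),
    Observation("crm onboarding", ["Rival"]),
]

presence = ai_presence_rate(log, "Acme")
primary = primary_citation_rate(log, "Acme")
print(f"AI Presence Rate: {presence:.0%}")
print(f"Primary citation rate: {primary:.0%}")
```

Because AI answers vary between runs, both ratios are only as reliable as the sampling behind them. Repeating each prompt several times and tracking the trend is likely more informative than any single reading.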

Frequently Asked Questions

Integrating AI into your SEO strategy offers a transformative shift in how businesses achieve online visibility and engage with modern searchers.

What Are the Key Benefits of AI in SEO?

Using AI in SEO provides immense efficiency and scale, allowing teams to automate time-consuming tasks like keyword clustering, technical audits, and content briefing in minutes. AI-powered systems deliver smarter insights by identifying patterns and keyword gaps that humans often miss, enabling you to capture rising trends before they peak. Furthermore, AI search visitors are remarkably valuable, often worth up to 4.4x more than traditional organic visitors due to their higher intent and lower bounce rates. Businesses adopting these technologies report a higher return on investment (ROI), with nearly 70% of companies seeing better returns after integrating AI into their workflows. Ultimately, AI allows brands to earn citations in AI Overviews, which can increase click-through rates and establish the brand as a primary source of truth.

How Does AI Change the SEO Landscape?

The fundamental metric of success is shifting from "ranking" to "citing," where being mentioned inside an AI-generated answer often matters more than holding a top organic position. Search is no longer confined to a single results page but has become a distributed network of AI answers, agents, and conversational interfaces across platforms like ChatGPT, Reddit, and YouTube. This "Search Everywhere" reality means that the internet's narrative voice is becoming more concise, declarative, and modular to remain "machine-readable" for AI summarization. Additionally, the rise of AI agents introduces a new type of visitor that browses sites on behalf of humans, requiring technical SEO to prioritize schema and factual precision over persuasive design. This transition has also accelerated zero-click search, with roughly 60% of traditional searches now yielding no clicks because the AI provides the answer directly on the results page.

Why Should You Adopt AI Strategies for SEO?

Adopting AI strategies is essential for survival in a competitive landscape where traditional SERPs are rapidly being replaced by "AI Mode". Early adopters gain a significant competitive edge by securing "citation share" before their niches become saturated with AI-generated content. As users transition to asking longer and more complex queries, only AI-optimized content can survive being rephrased by machines without losing its core meaning. Furthermore, brand trust and reputation have become core ranking signals; AI models prioritize content from sources they can verify through social mentions, reviews, and expert citations. Ignoring AI strategies risks making your brand invisible to the next generation of users, particularly Gen Z, who increasingly use AI chatbots as their primary discovery layer.

When Is the Right Time to Implement AI in Your SEO?

The right time to implement AI is immediately, as the transformation of search has already accelerated past the point of no return. Industry commentary frames 2026 as a year of refinement, in which short-term exploitative tactics are being squeezed out in favor of "reputation engineering". Organizations should follow a structured roadmap: start with a discovery phase to define business problems, move into a pilot program to validate accuracy, and eventually scale to full enterprise integration. Delaying implementation allows competitors to build the topical authority and citation stability required to dominate future search results.

Where Can You Find the Best AI Tools for SEO?

The best tools for AI SEO are categorized by their specific functions within the modern workflow:

Comprehensive Visibility & Research: Semrush offers an AI Visibility Toolkit for tracking brand mentions in LLMs, while Ahrefs provides "Brand Radar" to monitor AI search presence.

Content Optimization: Surfer SEO, Clearscope, and Frase (which features an autonomous AI Agent) are industry standards for semantic optimization and content briefing.

Technical Automation: OTTO SEO and SearchPilot automate technical fixes and A/B testing, while ContentKing provides real-time monitoring to protect against technical errors that could block AI crawlers.

Platform-Specific Insights: Bing Webmaster Tools now includes an "AI Performance" dashboard specifically to track citations across Microsoft Copilot and Bing AI summaries.

Conclusion: The Implementation Roadmap

Transitioning to AI SEO requires moving from isolated pilots to enterprise-wide adoption, while maintaining a Human-in-the-Loop requirement to ensure factual grounding and brand voice consistency.

30-60-90 Day Implementation Plan

Days 1–30 (Discovery & Pilot): Define business outcomes over rankings. Build a persona-driven keyword universe. Launch a pilot project using the Information Gain framework to validate citation share.

Days 31–60 (Technical & Regulatory Compliance): Establish "Vector Index Hygiene." Achieve <300ms speed. Activate Audit Logs and ensure all AI-ready content is grounded in approved, authoritative sources to meet regulatory trust standards.

Days 61–90 (Scale & Automation): Integrate agentic SEO tools (e.g., Frase/OTTO) to automate technical fixes. Scale your content moat through expert-led research and original data assets.

Search is no longer a destination; it is an operating reality that exists everywhere. Success in 2026 belongs to the brands that provide the raw, authoritative insights AI systems need to answer the world's questions.

Disclaimer: This page contains affiliate links. If you purchase through these links, we may earn a commission at no extra cost to you. We only recommend tools we trust.